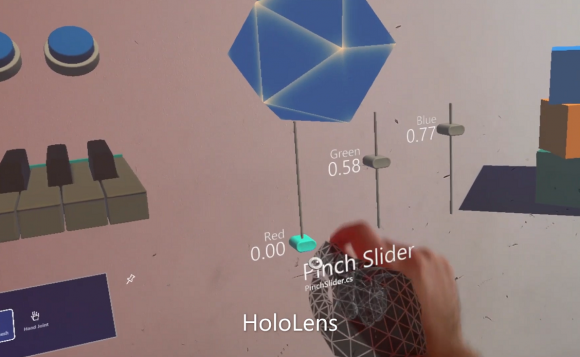

Once the MRTK library had been adapted to the Oculus Quest 2 and version 30 of the headset's operating system had added a pass-through feature, we decided to check whether the Oculus Quest 2 could serve as an alternative to the expensive HoloLens 2. To validate the idea, we built two applications with the same features, one for the Oculus Quest 2 and one for the HoloLens 2. You can see the result in the video at the end of the article.

A short introduction and comparison of the headsets: OQ2 vs. HL2

For those who want to get more information on these devices, we have prepared a small review.

Oculus Quest 2

Oculus Quest 2 is a virtual reality (VR) headset created by Oculus, a brand of Facebook. As with its predecessor, the Quest 2 can run both as a standalone headset with an internal, Android-based operating system and with Oculus-compatible VR software running on a desktop computer when connected over USB or Wi-Fi.

Hardware. The Quest 2 utilizes the Qualcomm Snapdragon XR2 system-on-chip (which is part of a Snapdragon product line designed primarily for VR and augmented reality devices), with 6 GB of RAM — an increase of 2 GB over the first-generation model.

Its display is a single fast-switch LCD panel with a resolution of 1832×1920 per eye, which can run at a refresh rate of up to 120 Hz (an increase from 1440×1600 per eye at 72 Hz on the first-generation model). The headset offers physical interpupillary distance (IPD) adjustment at 58 mm, 63 mm and 68 mm, set by moving the lenses into each position. This is combined with software adjustment.

The included Oculus Touch controllers are slightly bigger than before, with a design influenced by the original Oculus Rift’s controllers. Their battery life has also been increased four-fold over the controllers included with the first-generation Quest.

HoloLens 2

The HoloLens 2 is a pair of stereoscopic, full-color mixed reality smart glasses that combines waveguide and laser-based display technology, developed and manufactured jointly by Microsoft and MicroVision, Inc. in Redmond, Washington. It is the direct successor to the pioneering Microsoft HoloLens. Technically, it also descends from two MicroVision products: the stereoscopic, monochromatic laser-based virtual retinal display (VRD) and helmet-mounted display (HMD) prototype that was in the running for the canceled RAH-66 Comanche stealth helicopter, and the now-defunct monoscopic, monochromatic MicroVision Nomad Augmented Vision System.

Hardware. The original HoloLens, which the HoloLens 2 succeeds, features an inertial measurement unit (IMU) that includes an accelerometer, a gyroscope and a magnetometer, four “environment understanding” sensors (two on each side), an energy-efficient depth camera with a 120°×120° angle of view, a 2.4-megapixel photographic video camera, a four-microphone array, and an ambient light sensor.

The HoloLens contains an internal rechargeable battery, with average life rated at 2–3 hours of active use, or 2 weeks of standby time. The HoloLens can be operated while charging.

The original HoloLens features IEEE 802.11ac Wi-Fi and Bluetooth 4.1 Low Energy (LE) wireless connectivity. The headset uses Bluetooth LE to pair with the included Clicker, a thumb-sized, finger-operated input device that can be used for scrolling and selecting in the interface. The Clicker has a clickable surface for selecting and an orientation sensor that provides scrolling by tilting and panning the unit. It also has an elastic finger loop for holding the device and a USB 2.0 Micro-B socket for charging its internal battery.

HoloLens 2 and Oculus Quest 2 run different operating systems, provide different user experiences, and offer different ways of interacting with the system and the world.

Development process

Developing applications for Oculus Quest 2 with the Unity engine follows the usual rules and best practices, plus requirements specific to the Oculus Quest platform. For HoloLens 2, we have to develop an application for the Universal Windows Platform, with the specific restrictions and additional capabilities of the Windows 10 Holographic operating system, using the MRTK library provided by Microsoft.

Some time ago we found that the MRTK library had been adapted to the Oculus Quest 2, and we started using it in our projects. The library has a lot of ready-made elements that save time in prototyping and development.

Version 30 of the Oculus Quest 2 operating system brought a pass-through feature that lets you step outside your view in VR to see a real-time view of your surroundings. Pass-through uses the sensors on the headset to approximate what you would see if you could look directly through the front of the headset and into the real world around you. Given these capabilities, we decided to check whether the Oculus Quest 2 could be used as an alternative to the expensive HoloLens 2.

To validate the idea, we built two applications with the same features, one for the Oculus Quest 2 and one for the HoloLens 2. You can see the result in the video.

The summary

Oculus Quest cannot be used as an alternative to the HoloLens for our purpose. The pass-through feature is not the same as mixed reality, and the ability to work in the real world with Oculus Quest is limited. Oculus Quest also has no camera for recognizing images or objects, and it has no lidar or high-resolution cameras, because it is a B2C product, not a B2B product.

If you have tasks that are suitable for HoloLens 2, use it. Oculus Quest 2 gives some of the experience of using HoloLens 2, but that experience is too limited to make Oculus Quest 2 an alternative to HoloLens 2.